The Fermi Paradox poses a haunting question: if intelligent life is common in the universe, why haven’t we encountered it? Among the many proposed answers—ranging from interstellar distances to catastrophic self-destruction—there’s a lesser-explored possibility rooted in the technological and ethical choices of advanced civilizations.

Imagine a civilization capable of building fully autonomous robots that could mine materials, harvest energy, and construct copies of themselves. With enough sophistication, these machines, often called von Neumann probes, could replicate indefinitely, spreading from star system to star system to explore, terraform, or build vast structures. Because every new robot can build more, their numbers would grow exponentially, so given sufficient resources and time such a self-sustaining robotic network could colonize the galaxy.
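As a rough illustration of why exponential replication makes galactic colonization plausible, here is a minimal Python sketch. It rests on hypothetical assumptions: every probe builds exactly one copy of itself per generation (so the population doubles), no probe is ever lost, and the target is on the order of 10^11 star systems, a ballpark figure for the Milky Way. The function name `generations_to_reach` is illustrative only.

```python
def generations_to_reach(target: int, growth_factor: int = 2) -> int:
    """Number of replication generations for a single seed probe to grow into
    at least `target` probes, assuming the population multiplies by
    `growth_factor` each generation and no probe is ever lost (hypothetical)."""
    if growth_factor < 2:
        raise ValueError("growth_factor must be at least 2")
    population, generations = 1, 0
    # Keep multiplying until the population covers the target.
    while population < target:
        population *= growth_factor
        generations += 1
    return generations


# Hypothetical target: roughly 10^11 star systems.
print(generations_to_reach(10**11))  # 37
```

Under these simplified assumptions only 37 doublings are needed, which is why even slow replication could add up on cosmic timescales.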

However, this very capability might be the reason such civilizations choose not to use it.

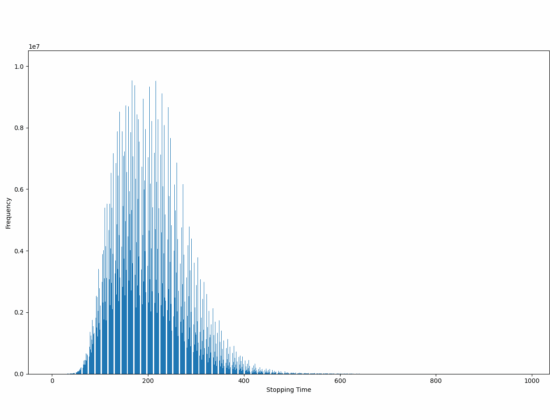

The dangers of uncontrolled replication are immense. Self-replicating robots, once released, could spiral beyond the control of their creators. Even well-designed safety protocols might fail over cosmic timescales: across an enormous number of replications, an error rate too small to matter for any single copy becomes ever more likely to surface somewhere. A tiny mutation in their design or programming could turn them from helpful explorers into unbounded consumers of resources, a scenario akin to the “grey goo” problem feared in nanotechnology.
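To make the "cosmic timescales" argument concrete: if each replication independently has some tiny probability p of producing an uncaught defect, the chance that at least one of n copies is defective is 1 - (1 - p)^n, which approaches 1 as n grows. The sketch below assumes independent errors and uses hypothetical values of p and n; the function `prob_at_least_one_failure` is illustrative, not a model of any real system.

```python
import math


def prob_at_least_one_failure(p: float, n: int) -> float:
    """Probability that at least one of n independent replications is faulty,
    given a hypothetical per-replication fault probability p.

    Computes 1 - (1 - p)**n via log1p/expm1 so that tiny p and huge n
    do not lose precision to floating-point rounding.
    """
    if not 0.0 <= p < 1.0:
        raise ValueError("p must be in [0, 1)")
    return -math.expm1(n * math.log1p(-p))


# Hypothetical: a one-in-a-trillion fault rate per copy.
p = 1e-12
for n in (10**9, 10**11, 10**13):
    print(f"n = {n:.0e}: P(at least one fault) = {prob_at_least_one_failure(p, n):.5f}")
```

With these numbers the probability climbs from about 0.1% to about 9.5% to above 99.99% as n grows, even though no single copy is likely to fail.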

For a responsible civilization, the risks might far outweigh the benefits. Sending self-replicating robots into the galaxy is not merely a technological choice; it is a moral gamble. The potential to inadvertently harm other life forms, disrupt planetary ecosystems, or even destroy other civilizations might make the idea ethically untenable.

In this view, the silence we observe is not because advanced civilizations can’t spread, but because they won’t. They may judge the risks of unchecked replication too high a price for exploration. This self-restraint would leave the galaxy looking empty, even if intelligent life is out there.

Perhaps the Fermi Paradox is not a mystery of absence, but a testament to the wisdom of restraint.